You can get the strategy right. You can fix the processes. And the whole thing can still fail because nobody addressed what changed for the people.

If you’ve been following this series, you know the thesis: AI doesn’t fix dysfunction; it multiplies it. Article 2 examined the strategy vacuum. Article 3 tackled the execution trap lurking in undocumented processes. This final article takes on the dimension organizations underestimate most: People. Not people as headcounts or a training problem. People as a system, with identity, judgment, fear, and meaning at the center of whether AI adoption takes hold.

From Knowledge Worker to Sense Maker

For over sixty years, the dominant frame for professional work has been Peter Drucker’s “knowledge worker”: people whose primary value lies in creating, analyzing, and applying information. That concept shaped how we designed organizations, built career paths, defined expertise, and decided what to reward.

AI is dissolving that frame. The cognitive tasks that defined knowledge work, including research, analysis, synthesis, pattern recognition, and drafting, are increasingly performed by AI systems that do them faster, at greater scale, and at a fraction of the cost. This does not mean knowledge workers are obsolete. It means the nature of their value is fundamentally shifting.

There is an emerging role, the “Sense Maker,” a worker whose primary value lies not in producing or analyzing information, but in judgment, discernment, and decision-making amid rapid AI-driven change. Sense Makers direct AI, interpret its outputs, and make the consequential calls that require context, ethics, and accountability that no model can provide.

The pattern is familiar if you know labor history. None of the transitions from the Agrarian Age to the Machine Age to the Information Age eliminated human contribution. Each one redefined where human value sat. We are in that redefinition now. Humans are moving from owning every step of the value chain to serving as strategic bookends: framing problems at the start and validating outcomes at the end, with AI as a self-sufficient collaborator in between.

This is not a training problem. It is an identity problem. When you tell a senior analyst that their value is no longer in building the model but in knowing which questions to ask and whether to trust the output, you are not upgrading a skill. You are asking them to renegotiate their professional identity. That negotiation is deeply human, and it doesn’t happen in a workshop.

The OCM Illusion

Most organizations believe they are addressing the human transformation. They have a change management workstream. They are running communications campaigns. They have scheduled training. They are checking the box.

The box is far too small.

Traditional OCM was designed for defined transitions with a clear before and after. The shift from knowledge worker to sense maker is not that kind of change. There is no stable destination to communicate. The skills required are still being defined. And the deepest barriers to adoption are not informational. They are emotional and cultural: fear of irrelevance, loss of expertise-based status, uncertainty about what “good” looks like in a role that didn’t exist two years ago, and a reasonable suspicion that “AI will make your job better” is corporate language for “AI will make your job disappear.”

When OCM is positioned as a support function within an AI deployment, it is structurally unable to address the cultural transformation the moment requires. You cannot manage your way through a shift of this magnitude. You lead through it.

Supporting Your Shift

Name the shift for what it is. This is a redefinition of where human value sits in the work. The honest conversation sounds like this: the nature of your role is changing, here is how we see it evolving, here is what we don’t know yet, and here is how we are going to figure it out together.

Go beyond OCM to culture transformation. The shift from knowledge worker to sense maker requires changes to how you evaluate performance, how you promote, how you staff projects, and what behaviors you signal as valuable. When the reward system still reflects the old model, no amount of messaging about the new model will be credible.

Invest in the middle. Mid-level professionals are the layer most disrupted and least supported. They are close enough to the work to feel the displacement and senior enough to have built identities around the old model. If you lose them, your AI strategy fails regardless of the technology.

Create genuine agency in the transition. Co-design the new ways of working with the people doing the work. Sense making, the very capability you need your people to develop, starts with giving them a meaningful role in shaping what their work becomes.

The Bottom Line

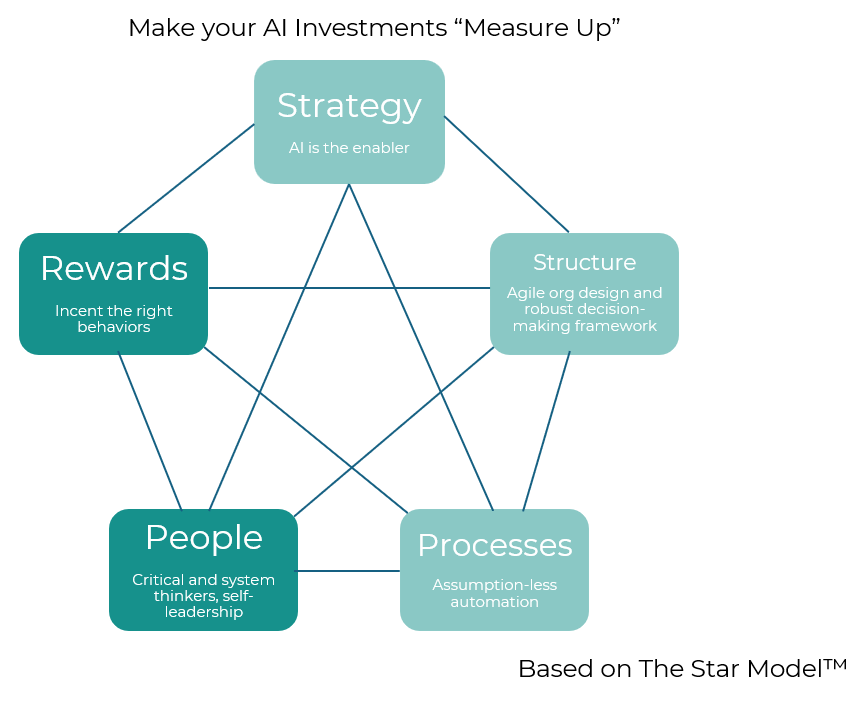

This series has walked through the Galbraith Star Model as a diagnostic for a specific problem: why AI deployments that should work don’t. The strategy vacuum that produces motion without direction. The process gap between documented fiction and operational reality. And now, the human variable: the fears, identities, and cultural systems that determine whether people engage or withdraw.

The flywheel works when all five dimensions reinforce each other. That alignment is not a precondition you achieve once and move past. It is the ongoing work of leading an organization through a transition with no fixed endpoint. The organizations that thrive will be the ones that build the capacity to evolve with it, not just technologically, but humanly.

AI readiness is people readiness. Everything else is infrastructure.

Does this resonate with you? Unify is here to help. Our consultants are ready to facilitate the conversations that move beyond change management into the culture transformation your AI investments require.

About The Shift Series

Shift Happens is a series exploring how organizations can turn disruption into direction. We write about the real, human side of work, where change, technology, behavior, and leadership collide in ways no framework fully captures.

Every article follows one of the five currents that shape modern work:

The Human Side of Transformation: the heartbeat beneath the strategy.

Change Management as the Missing Discipline: the discipline hiding in plain sight, quietly determining who succeeds.

Technology, Tools + Human Behavior: the space where logic meets instinct, and where most rollouts live or die.

Organizational Structure, Power & Governance: the lines, ladders, and tensions that decide how work truly flows.

Leadership Micro-Shifts, Governance & Operating Models: the small shifts that create disproportionate impact.

We combine lived experience with practical insight. The kind you can apply the same day, not someday.

Shift happens! But with the right mindset, it happens through you.

If your organization is navigating a shift in technology, structure, or culture and needs practical, human-centered support, reach out.

This is the work we love! And the work we do best.