Setting the Stage: Identity, Ego, and the Human Variable

AI doesn’t just change how we work. It changes who we are at work. It’s not a new tool. It’s a mirror. And when it gets it wrong, you take the blame. That’s not just technical risk. It’s identity risk. Trust grows faster when accountability is explicit: who owns the decision, who owns the system, and what happens when it fails?

In Chris Lewis’ “The Human Variable” article, she talked about shifting from “knowledge workers” to “sense makers” and how that shift requires people to rethink their professional identity.

In Ruxi Raduta’s article “The real reason change fails,” she pointed to the idea of ego death. In “Empathy without structure…,” she explained how frameworks help empathy land with the people it is meant to support.

All these ideas point to the same thing. When change happens, the human side of work becomes the real challenge.

We saw this firsthand during our internal AI hackathon. Teams weren’t just building prototypes. They were confronting their roles, relevance, and risk. One participant told us, “I didn’t realize how exposed I’d feel until the AI started making decisions I used to make.” In that moment, innovation and identity collided.

The Missing Ingredient: Trust

Here’s the point that matters most. We need trust.

Most AI efforts do not stall on skill. They stall on suspicion about intent, impact, and what happens when things go wrong.

Trust grows when behavior is consistent enough that people stop bracing for the surprise.

Trust requires leadership intent.

For many people, the first trust question isn’t “Will the model be accurate?” It’s “Will leaders replace me with the thing I build?” Leaders should name that fear and be consistent about the message: using and building AI is about growth. When employees believe they won’t “AI themselves out of work,” they engage, experiment, and learn faster.

So how do we respond when this new mirror reshapes how people see themselves at work? Not with more training modules or governance checklists. We start with trust. But trust doesn’t arrive fully formed. It evolves, and that evolution follows a curve.

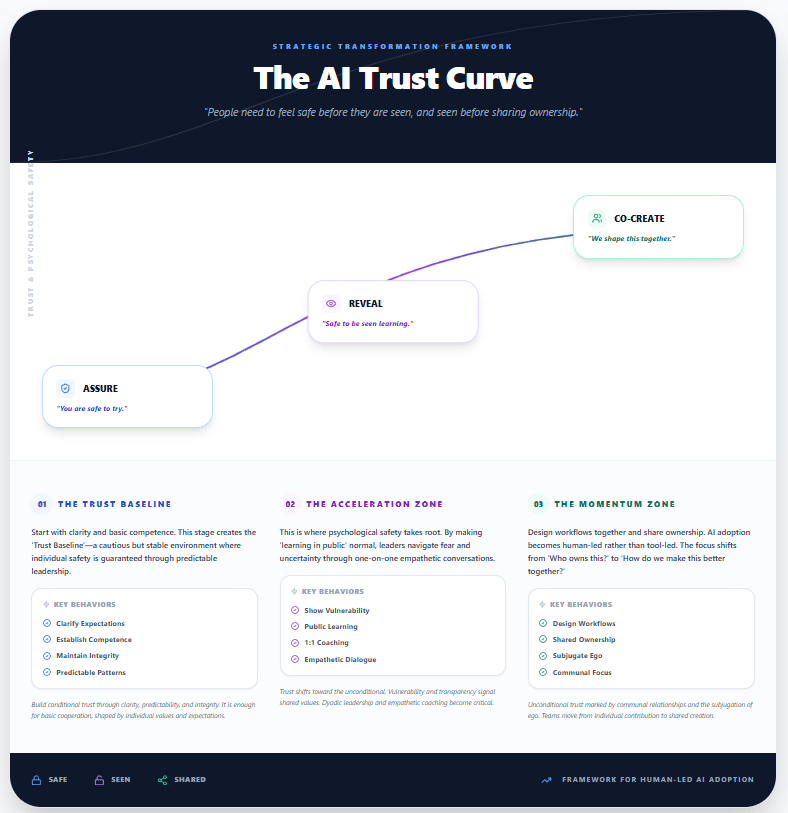

Introducing the AI Trust Curve

If we start with the end in mind, it becomes clear: we can’t ask people to co-create if they don’t feel safe. Trust can’t skip steps. People need safety before they can be seen learning, and they need to be seen before they’ll share ownership.

The AI Trust Curve has three stages. Each stage has a few behaviors that help trust grow.

ASSURE (Trust Baseline) “You are safe to try.”

Start with clarity, psychological safety, and basic competence.

This stage builds conditional trust through clarity, predictability, and integrity. It supports basic cooperation, but not transformation. Trust here is cautious, and it’s shaped by individual values, expectations, and day-to-day context.

In the hackathon, leaders set a clear baseline: rules, rewards, and expectations were explicit, and there was no punitive “gotcha.”

- Clear rules of engagement (what “good” looked like)

- Leaders available to remove blockers (fast responsiveness)

- One-on-one coaching to navigate uncertainty and build confidence

- Explicit message: AI is for growth and capability building, not replacement

That clarity gave people enough safety to start.

REVEAL (Trust Acceleration Zone) “It is safe to be seen learning.”

The method matters. Show vulnerability in a human way. Make learning in public feel normal.

Trust starts to move toward the unconditional. Vulnerability and transparency signal shared values. One-on-one, empathetic conversations become critical, and psychological safety starts to take root.

As work in progress became visible, leadership normalized learning out loud.

- One-on-one sessions to surface concerns privately first (psychological safety)

- Group conversations to share patterns without shaming individuals

- Questions and roadblocks were treated as progress signals, not interruptions

That’s when people started to feel safe being seen learning.

CO-CREATE (Trust Momentum Zone) “We shape this together.”

Design workflows together, share ownership, and celebrate early wins.

Unconditional trust shows up as communal relationships and real help-seeking. Teams move from compliance to co-ownership. AI adoption becomes human-led, not tool-led.

By the final day, teams shifted from ownership debates to shared improvement.

- Cross-team feedback loops (inside and outside their team)

- Collaboration despite competition (helping others win too)

- Shared narrative in the final presentation (problem, cause, solution, benefits, lessons)

That’s trust momentum. The work becomes “ours,” not “mine.”

Everyone Has a Role

Trust doesn’t just come from leaders. Teams and individuals shape it every day in small ways.

I will admit that I only use some of these behaviors some of the time. I am not as intentional as I want to be. You might feel the same way. If you lead people through AI-enabled change, make the implicit explicit: state clearly that AI adoption is about amplifying people, not replacing them. Then back that message up with your decisions.

Trust grows one conversation at a time. Research shows that dyadic (one-on-one) leader-member interactions are more effective than group interventions alone, especially in low-trust environments. Leaders must uncover each person’s “grounded rationality,” their goals, fears, and emotional state, to build real psychological safety.

Hackathons aren’t just innovation engines; they’re trust labs. They compress time, expose identity risk, and demand rapid psychological safety. When designed intentionally, they reveal where trust breaks and where it builds.

Where Are You and Your Company on the AI Trust Curve? A Quick Self-Assessment

Below is a quick “read-the-room” assessment you can complete in under two minutes.

Use it to compare intent vs. impact: how you think you’re showing up during AI-enabled change versus how others experience your leadership and team norms.

Rate each statement 1–5: 1 = not true yet, 3 = sometimes true, 5 = consistently true.

Scoring: Add up your six ratings for a total score (6–30). Optional: subtotal the two statements in each stage (2–10) to see where trust is strongest and weakest.

ASSURE (Trust Baseline)

- I understand what ‘good’ looks like when we use AI in my role (quality, boundaries, and escalation).

- If an AI-assisted decision goes wrong, accountability is clear, and I won’t be scapegoated for the tool’s failure.

REVEAL (Trust Acceleration)

- It’s safe to admit what I don’t know about AI and ask for help in front of others.

- Work-in-progress (drafts, prototypes, experiments) is treated as learning, not as a performance evaluation.

CO-CREATE (Trust Momentum)

- We co-design AI-enabled workflows with the people who do the work, instead of just rolling tools out to them.

- We celebrate small wins and lessons learned, not just final outcomes.

How to read your score (maturity + next moves)

6–13 = ASSURE (Trust Baseline): Trust is fragile or inconsistent. People may be willing to “try,” but they’re protecting themselves from blame or ambiguity.

What To Do Next: make boundaries explicit (what AI can/can’t be used for), clarify escalation paths, and publicly reinforce “no scapegoating” when tools fail.

14–22 = REVEAL (Trust Acceleration): Trust is growing and learning is happening, but it may still depend on who’s in the room or how visible the work is.

What To Do Next: normalize learning in public (show drafts, narrate tradeoffs), reward questions, and replace “gotcha” feedback with coaching conversations.

23–30 = CO-CREATE (Trust Momentum): Trust is strong enough for shared ownership. Teams can experiment quickly and improve systems together without fear.

What To Do Next: co-design workflows with frontline experts, build feedback loops into delivery, and scale what works (playbooks, communities of practice, peer demos).
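If you collect responses from a whole team, the arithmetic above is easy to automate. The following is a minimal Python sketch, not an official scoring tool: the function name `score_assessment` and the input shape (a dictionary of two ratings per stage) are assumptions made for illustration. The score ranges come straight from the bands listed above.

```python
from typing import Dict, List

# Stage names in curve order, as used in this article.
STAGES = ["ASSURE", "REVEAL", "CO-CREATE"]


def score_assessment(ratings: Dict[str, List[int]]) -> dict:
    """Total a six-statement assessment and map it to a stage.

    `ratings` is a hypothetical input shape: stage name -> two ratings,
    each on the 1-5 scale described in the article.
    """
    for stage in STAGES:
        values = ratings[stage]
        if len(values) != 2 or any(not 1 <= v <= 5 for v in values):
            raise ValueError(f"{stage} needs exactly two ratings between 1 and 5")

    # Stage subtotals range from 2 to 10; the total ranges from 6 to 30.
    subtotals = {stage: sum(ratings[stage]) for stage in STAGES}
    total = sum(subtotals.values())

    # Bands from the article: 6-13, 14-22, 23-30.
    if total <= 13:
        stage_label = "ASSURE"
    elif total <= 22:
        stage_label = "REVEAL"
    else:
        stage_label = "CO-CREATE"

    return {"total": total, "subtotals": subtotals, "stage": stage_label}


example = score_assessment(
    {"ASSURE": [4, 3], "REVEAL": [3, 2], "CO-CREATE": [2, 2]}
)
print(example)
```

In this example the ratings sum to 16, which falls in the REVEAL band, while the stage subtotals (7, 5, and 4) show that trust thins out further along the curve.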

Use your stage subtotals to find your “trust bottleneck.” A low score in an earlier stage will cap progress in later stages (you can’t skip steps on the curve).

- ASSURE subtotal: 2–4 = unclear expectations or fear of blame; 5–7 = baseline clarity but inconsistent follow-through; 8–10 = strong guardrails and psychological safety to try.

Leader challenge: write down “what good looks like” for AI use in your context (quality bar, review steps, red lines) and socialize it; explicitly state what happens when AI is wrong.

- REVEAL subtotal: 2–4 = people hide uncertainty; 5–7 = selective vulnerability; 8–10 = learning out loud is normal.

Leader challenge: model vulnerability first (share what you’re learning and where you’re unsure), and protect experiments from being treated like performance ratings.

- CO-CREATE subtotal: 2–4 = rollout mindset (tools pushed to people); 5–7 = collaboration happens but isn’t systematic; 8–10 = shared ownership with continuous improvement loops.

Leader challenge: move from “adoption” to “design”: bring doers into workflow design, run short pilots, and celebrate lessons learned—not just wins.

Pick two moves: choose one action to stabilize trust (from the earliest low-scoring stage) and one action to stretch your leadership (from the next stage up). Repeat the assessment in 30–60 days and look for movement in the stage subtotals—not just the total.
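For teams that track results in a spreadsheet or script, the "pick two moves" step and the 30–60 day comparison can be sketched in a few lines of Python. This is one possible reading, not a prescribed method. The helper names (`band`, `pick_two_moves`, `movement`) are hypothetical, and the sketch assumes "earliest low-scoring stage" means the first stage, in curve order, that sits in the lowest subtotal band present.

```python
from typing import Dict, Optional, Tuple

# Stage names in curve order, as used in this article.
STAGES = ["ASSURE", "REVEAL", "CO-CREATE"]


def band(subtotal: int) -> int:
    """Map a stage subtotal to its band: 0 for 2-4, 1 for 5-7, 2 for 8-10."""
    if not 2 <= subtotal <= 10:
        raise ValueError("stage subtotals range from 2 to 10")
    if subtotal <= 4:
        return 0
    if subtotal <= 7:
        return 1
    return 2


def pick_two_moves(subtotals: Dict[str, int]) -> Tuple[str, Optional[str]]:
    """Return (stage to stabilize, stage to stretch into).

    Assumption: the bottleneck is the earliest stage in the lowest band
    present. If every stage shares a band, this falls back to ASSURE.
    """
    bands = [band(subtotals[stage]) for stage in STAGES]
    # list.index returns the first match, which is the earliest stage.
    idx = bands.index(min(bands))
    stretch = STAGES[idx + 1] if idx + 1 < len(STAGES) else None
    return STAGES[idx], stretch


def movement(before: Dict[str, int], after: Dict[str, int]) -> Dict[str, int]:
    """Change in each stage subtotal between two assessments."""
    return {stage: after[stage] - before[stage] for stage in STAGES}


first = {"ASSURE": 4, "REVEAL": 6, "CO-CREATE": 4}
later = {"ASSURE": 7, "REVEAL": 6, "CO-CREATE": 5}

print(pick_two_moves(first))
print(movement(first, later))
```

Here ASSURE and CO-CREATE both sit in the lowest band, so the sketch picks ASSURE to stabilize (it comes first on the curve) and REVEAL as the stretch. The movement output shows where the subtotals shifted between the two assessments, which is the signal the repeat assessment is meant to surface.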

Call to Action

Take the assessment, then compare how you try to build trust with how others experience it.

Above all, be intentional. Be clear about boundaries. Co-design the work. Learn together and remember to celebrate progress.

About The Shift Series

The Shift is a series about how organizations can turn disruption into direction. We explore change management, culture, communication, and technology through the human side of transformation.

Shift happens. With the right mindset, it happens through you.

If your organization is navigating a shift in technology, structure, or culture and needs practical support, reach out.

This is the work we love. And the work we do best.